“Can’t you come up with a technology for my touch-operated cooker? It doesn’t work when my hands are wet!”

Sometimes it only takes a throw-away comment to trigger a revolution. Three photonics researchers took up the challenge and were soon able to demonstrate a simple technology that uses light rather than charged metal wires to determine the placement of a finger on the screen. Today, eight years on, the Danish-Chinese joint venture ‘O-Net WaveTouch’ is about to launch the first product to contain this particular DTU technology.

It is 2006, and Jørgen Korsgaard has just bought himself a touch-operated cooking range. It is a neat piece of equipment, but there is one minor problem: you can only switch it on and off if your hands are dry. Jørgen is a businessman, but has everyday contact with the researchers at the Department of Optics and Fluid Dynamics at Risø National Laboratory, where he has rented office space for his development company OPDI. So one day, he throws out a comment about the problem during the lunch break.

A team of researchers—Henrik Pedersen, Michael Jakobsen and Steen Hanson—take on the challenge and soon come up with a solution where light channelled into a plastic panel is activated through the touch of a finger. And it works regardless of whether the finger in question is wet or dry. This means the problem can be solved—and the idea can almost certainly be sold to cooking range manufacturers. However, the businessman quickly spots a much broader market, because the use of touchscreen telephones is on the rise, and even though such phones may not necessarily have to work under water, the optical screen solution features another major benefit: it is much cheaper to manufacture.

Light runs straight on its own

In a normal touchscreen, the upper glass layer is coated with a conductive material in the form of a grid of ultra-thin metal tracks carrying current. When a finger touches the screen, it affects some of the electrical charge and this change in the electrical field is registered by a sensor.

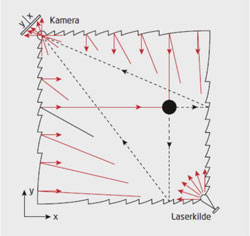

The WaveTouch screen is ‘just’ a plastic panel with small notches cut along the edge. The metal tracks have been replaced by a web of infra-red light rays which are transmitted into the screen from one corner and then run across it in straight lines on their own. When the light hits an edge, it is sent back by serrated reflectors which channel the light along a grid of vertical and horizontal rays, forming a finely meshed system of coordinates. The edges are also angled to send the rays up and down within the limits of the panel, and the rays travelling upwards are the ones that interact with the finger.

A little sensor in the opposite corner then establishes precisely where in the system of coordinates the finger has extinguished the light; the sensor can even read multiple simultaneous touches. And with one more detail—a number of small lenses that spread the laser beams with sufficient precision to ensure they cover the entire screen—you suddenly have a new, much cheaper technology for touchscreens.
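To make the coordinate idea concrete, here is a minimal sketch of how a touch might be located from a grid of rays. It is an illustration only, not the WaveTouch algorithm: the function name `locate_touch`, the normalised brightness readings (1.0 for an unobstructed ray) and the `threshold` value are all assumptions, and the sketch handles a single touch rather than the multiple touches the real sensor can read.

```python
from typing import Optional, Sequence, Tuple


def locate_touch(
    col_levels: Sequence[float],
    row_levels: Sequence[float],
    threshold: float = 0.5,  # assumed cut-off for "ray interrupted"
) -> Optional[Tuple[float, float]]:
    """Return the (x, y) grid position of a single touch, or None.

    col_levels[i] is the assumed brightness of vertical ray i and
    row_levels[j] that of horizontal ray j, normalised so that 1.0
    means the ray arrives at the sensor undisturbed.
    """
    # Rays whose brightness drops below the threshold count as interrupted.
    dark_cols = [i for i, level in enumerate(col_levels) if level < threshold]
    dark_rows = [j for j, level in enumerate(row_levels) if level < threshold]

    # A touch must interrupt at least one ray in each direction.
    if not dark_cols or not dark_rows:
        return None

    # A finger is wider than one ray, so take the centre of the dimmed rays.
    x = sum(dark_cols) / len(dark_cols)
    y = sum(dark_rows) / len(dark_rows)
    return (x, y)


if __name__ == "__main__":
    cols = [1.0, 1.0, 0.2, 0.1, 1.0]  # vertical rays 2 and 3 are dimmed
    rows = [1.0, 0.3, 1.0]            # horizontal ray 1 is dimmed
    print(locate_touch(cols, rows))
```

Averaging the dimmed rays gives a position finer than the ray spacing. Telling two simultaneous fingers apart would need more information than this simple row-and-column reading provides.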

The path to market

The technology was quickly described in five patents, marking the start of its long journey to the market. It turned out to be both difficult and expensive to have a plastic panel made with serrated edges of optical quality. A costly moulding tool had to be custom-built for the first demo models, and the initial sales pitch to companies including Apple and Motorola in the United States was made without a prototype.

The concept nevertheless attracted a great deal of attention, and it was the Chinese company O-Net Communications that finally purchased 40 per cent of the rights to the technology for the net sum of USD 3 million in 2013. O-Net WaveTouch was founded with Jørgen Korsgaard as CEO, and the company currently employs five people in Copenhagen and three others in Shenzhen, near Hong Kong. DTU researchers still serve as consultants on the technology.

The first prototype is a touchscreen for a watch, and work is currently underway on a GPS screen, as well as on demo screens for iPhones and iPads.

“The company is counting on launching an inexpensive 4-inch touchscreen towards the end of the year. However, a lot can happen while technology makes its way out into the real world,” says Henrik Pedersen, his words backed by experience.

Article in DTUavisen no 4, April 2015.

The beams of light criss-cross the entire surface, and the camera reads where they have been interrupted by a finger.

Explanation from the researchers: The laser source (V) emits light and the built-in reflectors (C and F) distribute it across the plastic screen. When a finger touches the screen (T), it interrupts the beam of light, and the camera (D.A) translates the position to a point in a system of coordinates.